Week 3!

Dad Joke

- What did the French man say when he went down the slide?

- Yeeesss!

Housekeeping

- Ideas for projects due today

- Feedback within the next few days

- Pep talk :)

- It’s OK to be lost

- The class is front-loaded; we will slow down

- Move catch-up week?

Concepts I want to discuss

- Importing

- Comments in code
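A minimal sketch of the three common import styles, with comments that explain why a step is there rather than restating the code. The `ages` list is made-up example data, not anything from the course materials.

```python
# Import a whole module, then reach into it with a dot.
import math

# Import one name directly from a module.
from statistics import mean

# Import a module under a shorter alias.
import collections as col

# Hypothetical example data: ages of five survey respondents.
ages = [23, 35, 41, 29, 54]

# A good comment explains *why*, not just *what*:
# round up so that we never understate the average age.
average_age = math.ceil(mean(ages))

# Counter tallies how many respondents fall in each decade (20s, 30s, ...).
decade_counts = col.Counter(age // 10 * 10 for age in ages)

print(average_age)
print(decade_counts)
```

All three modules ship with Python, so nothing needs to be installed to try this.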

Concepts I want to discuss

- Debugging

- print()

- intermediate outputs

- KISS

- Simple functions

- Understandable variable names
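One way these ideas could fit together, as a sketch: small functions, descriptive variable names, and a `print()` after each step so the intermediate outputs show where things go wrong. The function names and the example sentence are invented for illustration.

```python
def clean_word(word):
    """Lowercase a word and strip surrounding punctuation."""
    return word.lower().strip(".,!?")


def count_long_words(text, min_length=5):
    """Count words with at least min_length characters."""
    words = text.split()
    print("words:", words)  # intermediate output: did the split work?

    cleaned_words = [clean_word(word) for word in words]
    print("cleaned_words:", cleaned_words)  # did the cleaning work?

    long_words = [word for word in cleaned_words if len(word) >= min_length]
    print("long_words:", long_words)  # did the filter keep the right words?

    return len(long_words)


long_word_count = count_long_words("Debugging is easier, one small step at a time!")
print("long_word_count:", long_word_count)
```

Once the function behaves as expected, the `print()` calls inside it can be deleted or commented out.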

Other concepts that are confusing?

Code Challenge Review

Paper discussion

Analyzing large datasets is different

- Often trace data is complete*

- Doesn’t suffer from sampling bias

- But lots of other biases:

- Sampled population != population of interest

- Reliant on systems / APIs produced by others

- Bugs in code

- Construct validity

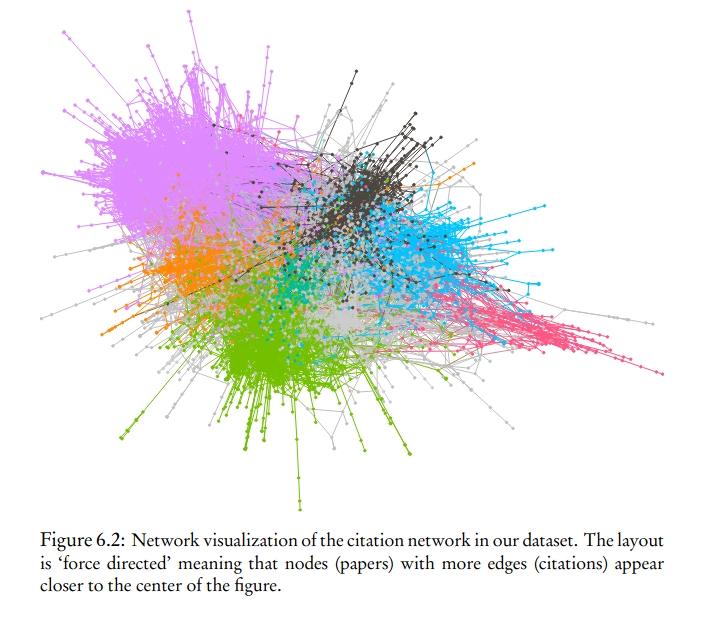

Network analysis

- Turn relationships into networks

- Visual representations can be confusing

- Useful when relationships are an important part of understanding a process
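A rough sketch of turning relationships into a network using only built-in Python. The `replies` records are hypothetical, and treating replies as undirected ties is an assumption made to keep the example short.

```python
from collections import defaultdict

# Hypothetical "who replied to whom" records.
replies = [
    ("ana", "ben"),
    ("ben", "cam"),
    ("ana", "cam"),
    ("dee", "ana"),
]

# Build an undirected network as a mapping: person -> set of neighbors.
network = defaultdict(set)
for source, target in replies:
    network[source].add(target)
    network[target].add(source)

# Degree = number of distinct people each person is connected to.
degree = {person: len(neighbors) for person, neighbors in network.items()}

for person, connections in sorted(degree.items()):
    print(person, connections)
```

Summary numbers like degree are often easier to read than a drawing of the network, especially once there are more than a few dozen nodes.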

Text analysis

- Lots of different approaches

- Dictionary

- Sentiment analysis
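A toy sketch of the dictionary approach applied to sentiment: count words from a positive list and a negative list and take the difference. The word lists and posts here are invented and far too small for real use; published sentiment dictionaries are much larger.

```python
# Hypothetical, tiny word lists; real dictionaries are far larger.
POSITIVE_WORDS = {"good", "great", "love", "happy"}
NEGATIVE_WORDS = {"bad", "awful", "hate", "sad"}


def sentiment_score(text):
    """Return (number of positive words) - (number of negative words)."""
    words = [word.strip(".,!?").lower() for word in text.split()]
    positive_count = sum(word in POSITIVE_WORDS for word in words)
    negative_count = sum(word in NEGATIVE_WORDS for word in words)
    return positive_count - negative_count


posts = [
    "I love this class, it is great!",
    "The weather is awful and I am sad.",
    "We meet on Tuesday.",
]
scores = [sentiment_score(post) for post in posts]
print(scores)
```

A simple counter like this ignores negation ("not good") and sarcasm, which is one reason dictionary results need to be checked against the text.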

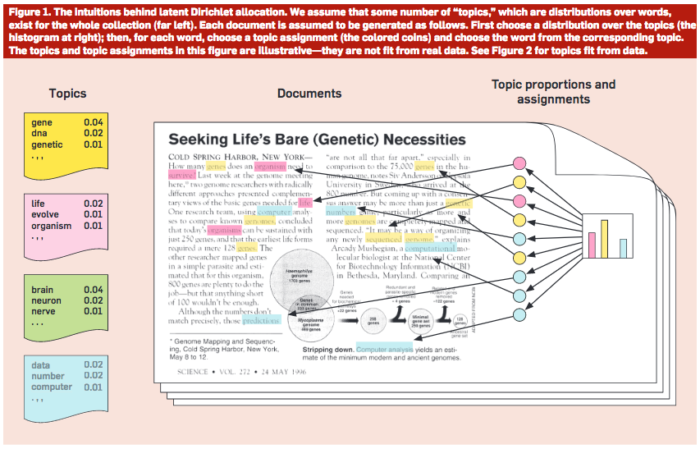

Topic modeling

- Goal: find a set of k topics, where each topic is a weighted combination of terms and each document is a weighted combination of topics.

- Requires a lot of interpretation

- Computational grounded theory (Nelson, 2017) is one attempt to get “best of both worlds”
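One way to write the goal down, assuming a mixture-style topic model such as LDA (the slides do not name a specific model). Here w is a term, d is a document, and t indexes the k topics; these symbols are introduced only for this sketch.

$$
p(w \mid d) = \sum_{t=1}^{k} p(w \mid t)\, p(t \mid d)
$$

The first factor describes each topic as a distribution over terms, and the second describes each document as a distribution over topics. The model returns these numbers; deciding what each topic means is the interpretive step.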

Prediction vs inference

- Differences in focus

- Prediction: good at predicting outcomes; inference: good at understanding the process that leads to outcomes

- Prediction approaches

- Training data and test data

- Cross-validation and overfitting

- Prediction cares less about interpretability of the model or connection to theory

- Some of these can (and should!) be used by social scientists.
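A minimal sketch of training data, test data, and k-fold cross-validation using only built-in Python. The data are simulated, and the one-parameter line through the origin is a stand-in for whatever model is actually being evaluated; all function names are made up for this example.

```python
import random

# Hypothetical data: (hours_online, posts_written) pairs, linear trend plus noise.
rng = random.Random(0)  # fixed seed so the example is reproducible
data = [(x, 2 * x + rng.gauss(0, 1)) for x in range(20)]


def k_fold_splits(rows, k):
    """Yield (training_rows, test_rows) pairs, one per fold."""
    shuffled = rows[:]
    random.Random(1).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    for i, test_rows in enumerate(folds):
        training_rows = [row for j, fold in enumerate(folds) if j != i for row in fold]
        yield training_rows, test_rows


def fit_slope(rows):
    """Least-squares slope for a line through the origin: y = slope * x."""
    return sum(x * y for x, y in rows) / sum(x * x for x, y in rows)


def mean_squared_error(slope, rows):
    """Average squared gap between predicted and observed y."""
    return sum((y - slope * x) ** 2 for x, y in rows) / len(rows)


test_errors = []
for training_rows, test_rows in k_fold_splits(data, k=5):
    slope = fit_slope(training_rows)  # fit on training data only
    test_errors.append(mean_squared_error(slope, test_rows))  # score on held-out data

print(sum(test_errors) / len(test_errors))
```

Scoring on rows the model never saw during fitting is what guards against overfitting: a model that only memorized its training data would look good on the training rows and poor on the held-out fold.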

Reproducibility

- Code and data can be shared really easily

- Replicate this paper?

Comments and functions